In 2026, simply uploading your company’s sensitive data into public cloud models is no longer a viable strategy. AI is expanding at an unprecedented pace and has moved from novel experiment to daily operational necessity. For UK SMEs, however, that potential often comes with significant apprehension. The true leaders in this space are those who adopt AI to drive concrete business action without compromising their intellectual property or inflating their operational costs.

Traditional cloud-based AI has served as the foundational entry point for many businesses. However, the most forward-thinking organisations are now bridging the gap between innovation and security by integrating Privacy-first AI directly into their own local ecosystems. This evolution shifts the organisational posture from a risky reliance on third-party servers to a proactive, secure strategy that keeps confidential data safely on-premises.

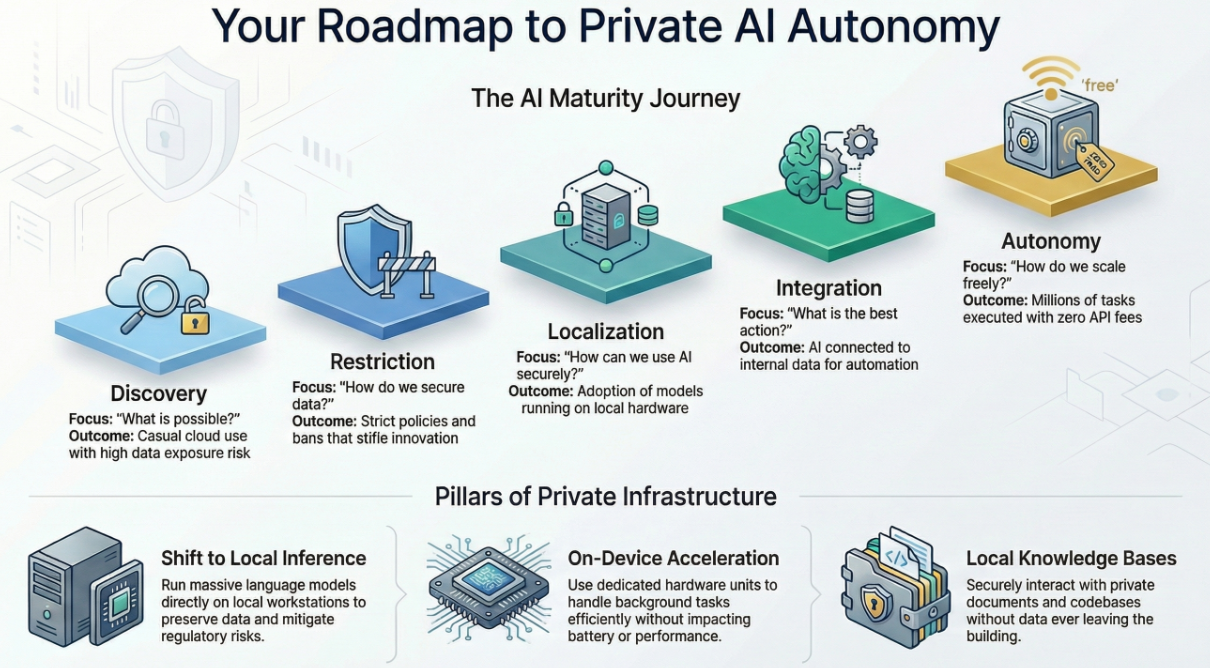

The Spectrum of AI Adoption: A Maturity Journey

To truly leverage Local AI Tools, enterprises must view their progress through the lens of an AI Maturity Model. This isn’t just about downloading new software; it is a structured progression in how an organisation handles intelligence.

Building a Resilient Local AI Architecture

Transitioning to private capabilities does not require discarding your AI ambitions; rather, it involves enriching them through a more sophisticated, self-hosted architecture.

Technical Integration: Marrying Privacy with Usability

A major hurdle in modern analytics and AI is making complex open-source models accessible to frontline staff. Many platforms now address this by pairing those models with intuitive graphical interfaces. For example, a business user can open a desktop app that provides a chat interface but runs entirely offline. Users such as a marketing manager or HR director could then generate sensitive reports without any cloud processing, so the underlying data never leaves the device.
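For teams that want to verify the "runs entirely offline" claim rather than take it on trust, one simple check is to confirm that the model endpoint a desktop app is configured to use points at the local machine. The sketch below is illustrative only: `is_local_endpoint` is a hypothetical helper, the URLs are made-up examples, and it assumes the app exposes its model over a URL in the first place.

```python
import ipaddress
from urllib.parse import urlparse


def is_local_endpoint(url: str) -> bool:
    """Return True only if the URL's host is localhost or a loopback IP."""
    host = urlparse(url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        # Covers 127.0.0.0/8 and the IPv6 loopback address ::1.
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Any other DNS name is treated as non-local; we do not resolve it.
        return False


# Hypothetical endpoints, for illustration only.
print(is_local_endpoint("http://127.0.0.1:8080/v1/chat"))  # True
print(is_local_endpoint("https://api.example.com/v1"))     # False
```

A check like this only covers configuration. It does not prove that an application makes no other network calls, so it complements network-level controls and does not replace them.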

High-Impact Use Cases Across Sectors

The ability to process data securely offers a tangible edge across almost every business function.

Environmental and Operational Trade-Offs

Shifting to local infrastructure provides strong data privacy and control, but local AI adoption still carries environmental and operational trade-offs. The broader environmental footprint of data centres is immense, as generative AI workloads can consume seven or eight times more energy than typical computing tasks. Driven by this intense demand, global data centre electricity consumption is expected to approach 1,050 terawatt-hours by the end of 2026, a volume that would place data centres fifth globally in electricity consumption, between Japan and Russia. The technology also places an enormous strain on water resources, with facilities needing an estimated two litres of water for cooling for every kilowatt-hour of energy consumed.

On an operational level, organisations moving away from the cloud to host their own models must absorb the "hidden costs" of local deployment. These include increased on-site electricity usage, technical setup time, ongoing hardware maintenance, and the inability to scale resources automatically during unexpected spikes in demand. Furthermore, the upfront cost of the necessary hardware, particularly the consistently high prices of RAM and advanced GPUs, remains a significant barrier to widespread local AI adoption.
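To make the electricity side of these hidden costs concrete, a back-of-the-envelope estimate can help. The sketch below is a rough illustration, not a costing tool: the 500 W power draw, the usage pattern and the £0.25 per kWh tariff are placeholder assumptions to replace with your own figures, and the water calculation simply applies the two-litres-per-kilowatt-hour data centre estimate quoted above to the same amount of energy for comparison.

```python
def local_energy_cost(power_watts, hours_per_day, days, price_per_kwh):
    """Return (energy in kWh, electricity cost) for a machine running AI workloads."""
    kwh = power_watts / 1000 * hours_per_day * days
    return kwh, kwh * price_per_kwh


def cooling_water_litres(kwh, litres_per_kwh=2.0):
    """Cooling water a data centre would need for the same energy,
    using the roughly 2 litres per kWh estimate cited in the article."""
    return kwh * litres_per_kwh


# Assumed figures, for illustration only: a 500 W workstation used
# 8 hours a day for 250 working days, at an assumed tariff of £0.25/kWh.
kwh, cost = local_energy_cost(500, 8, 250, 0.25)
print(kwh)                        # 1000.0 kWh per year
print(cost)                       # 250.0 (pounds per year)
print(cooling_water_litres(kwh))  # 2000.0 litres for the data centre equivalent
```

Hardware purchase, maintenance and setup time sit on top of this figure, so it is best read as a lower bound on the running cost.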

Governance, Privacy, and the Path Forward

You cannot scale intelligence on top of chaos, nor can you build trust on leaky infrastructure. Effective AI deployment requires a culture of security, where strict data boundaries guard against regulatory breaches. Furthermore, as privacy regulations tighten globally, pioneers are looking toward complete technological autonomy. The time to steer your business while paying recurring tolls to cloud providers is over. By unifying your daily operations with Local AI Tools, you empower every decision-maker to operate with absolute privacy, turning your secure data into a true engine of strategic, cost-effective growth.

Sources include:

On-Premise vs Cloud, Lenovo Press

Explained: Generative AI's environmental impact, Massachusetts Institute of Technology (MIT) News

CES 2026: The year of AI PCs?, ITPro