In the rapidly shifting landscape of artificial intelligence, Natural Language Processing (NLP) remains the primary engine of innovation, fundamentally changing how we interact with technology and how machines interpret our world. We have moved well beyond the era of simple word-matching into a phase where AI is beginning to "think" through problems, simulate physical realities, and operate as a collaborative partner rather than a mere tool. This article explores the most significant developments that are redefining the future of NLP and its impact on the enterprise.

Beyond Scaling: The Emergence of the 'Thinking' Model

For years, the industry focused on the "scaling hypothesis": the idea that simply adding more parameters and data would lead to higher intelligence. As we approach 2026, however, the focus has shifted from raw size to reasoning capability. Models such as DeepSeek-R1 and OpenAI o1 use large-scale reinforcement learning to develop extended "Chain-of-Thought" processing. They still generate text token by token, but they are trained to work through intermediate steps before answering: exploring alternative logical paths, checking their own outputs, and, as the DeepSeek-R1 authors describe it, sometimes reaching "Aha moments" in which they identify and correct their own errors mid-task. This transition from pattern-matching to deliberate reasoning is essential for high-stakes fields like mathematical research, legal analysis, and scientific discovery, where the "how" and "why" of an answer are as important as the answer itself.

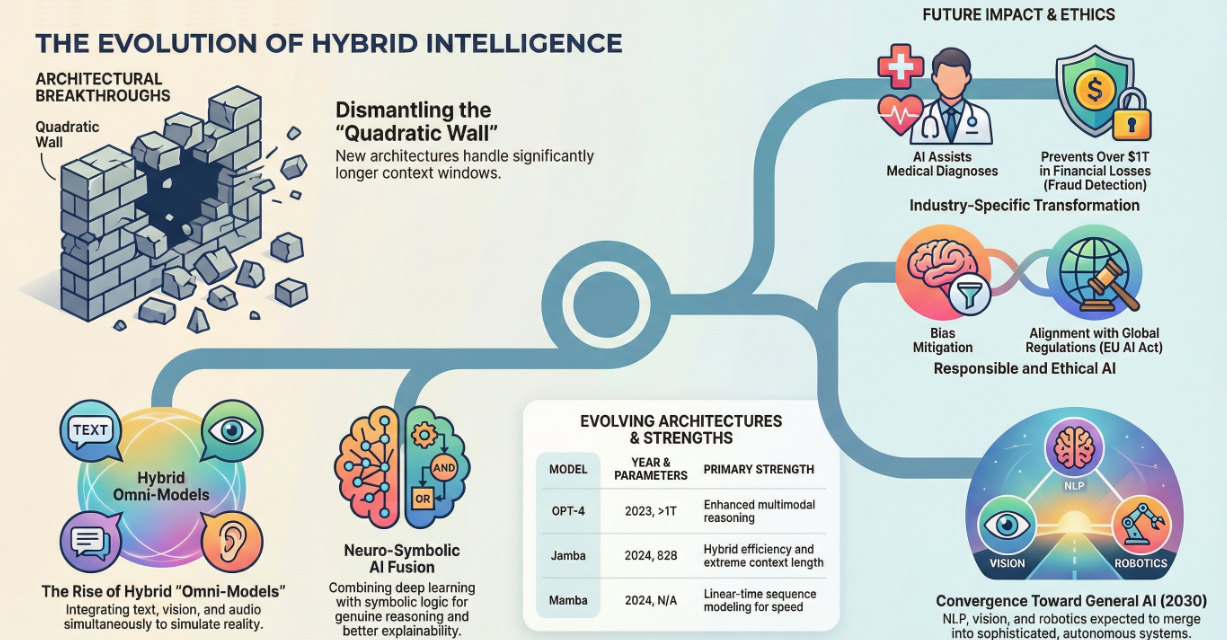

Breaking the 'Quadratic Wall': The Rise of New Architectures

While the Transformer architecture has been the dominant standard since its introduction in 2017, it faces a fundamental bottleneck: the cost of its self-attention mechanism grows quadratically with input length, because every token is compared with every other token. This "quadratic wall" makes processing massive documents or long conversational histories prohibitively expensive. In response, we are seeing a radical divergence in model design. State Space Models (SSMs), such as Mamba, scale near-linearly with sequence length, allowing them to process context windows of hundreds of thousands of tokens with far greater efficiency than traditional Transformers. Furthermore, hybrid architectures like Jamba have emerged, interleaving Transformer layers with Mamba and Mixture-of-Experts (MoE) modules. This "best of both worlds" approach aims to combine the precise reasoning of Transformers with the throughput and memory efficiency of SSMs, supporting a context window of 256,000 tokens (roughly 800 pages of text). Its developer, AI21 Labs, reports that up to 140,000 tokens of context fit on a single 80 GB GPU, which is a data-centre accelerator rather than consumer-grade hardware.
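To make the "quadratic wall" concrete, here is a rough back-of-the-envelope sketch in Python. It is not a benchmark: the two cost formulas ignore constant factors, projections, and hardware effects, and the function names and the model sizes (`d_model=64`, `d_state=16`) are illustrative assumptions rather than figures from any particular model.

```python
def attention_cost(n_tokens: int, d_model: int) -> int:
    """Rough operation count for self-attention scores.

    Every token is compared with every other token, so the count
    grows with n_tokens squared.
    """
    return n_tokens * n_tokens * d_model


def ssm_scan_cost(n_tokens: int, d_model: int, d_state: int) -> int:
    """Rough operation count for a linear recurrent scan.

    Each token updates a fixed-size state once, so the count
    grows linearly with n_tokens.
    """
    return n_tokens * d_model * d_state


if __name__ == "__main__":
    d_model, d_state = 64, 16  # illustrative sizes, not taken from a real model
    for n in (1_024, 16_384, 262_144):
        attn = attention_cost(n, d_model)
        scan = ssm_scan_cost(n, d_model, d_state)
        print(f"{n:>8} tokens: attention/scan cost ratio = {attn // scan}")
```

Under these simplified assumptions, doubling the input length quadruples the attention cost but only doubles the scan cost, which is why the gap widens as documents get longer.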

Efficiency and the Democratisation of Edge AI

The quest for efficiency is also driving a shift toward Edge AI and "TinyML," where sophisticated models run locally on smartphones and laptops rather than in the cloud. Innovations like 1-bit LLMs (e.g., Microsoft’s BitNet b1.58) represent a paradigm shift. By confining model weights to just three values (-1, 0, and 1), each weight carries about 1.58 bits of information instead of 16, which in principle shrinks weight storage by up to roughly tenfold. It also lets most of the multiplications in matrix products be replaced with simple additions and subtractions, dramatically boosting energy efficiency. Optimisation is also occurring at the training level. In reported experiments, the Muon optimizer has achieved model quality comparable to the industry-standard AdamW while using roughly half the training compute. Such improvements mean that advanced NLP is no longer reserved for those with massive data centres, but is increasingly accessible to smaller organisations and individual users.
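The ternary-weight idea can be sketched in a few lines of plain Python. This is a minimal illustration, not Microsoft's implementation: the "absmean" scaling rule (divide by the mean absolute weight, then round and clip) is an assumption about how such weights could be derived, and the function names and sample numbers are hypothetical.

```python
def absmean_ternary(weights: list[list[float]], eps: float = 1e-8):
    """Quantise a weight matrix to {-1, 0, 1} plus one shared scale.

    Assumed scheme: scale by the mean absolute weight, round to the
    nearest integer, then clip into the range [-1, 1].
    """
    magnitudes = [abs(w) for row in weights for w in row]
    scale = sum(magnitudes) / len(magnitudes)
    quantised = [
        [max(-1, min(1, round(w / (scale + eps)))) for w in row]
        for row in weights
    ]
    return quantised, scale


def ternary_matvec(quantised: list[list[int]], scale: float, x: list[float]):
    """Matrix-vector product using only additions and subtractions per weight.

    A single multiplication by the shared scale remains per output value.
    """
    outputs = []
    for row in quantised:
        acc = 0.0
        for q, xi in zip(row, x):
            if q == 1:
                acc += xi
            elif q == -1:
                acc -= xi
            # q == 0 contributes nothing, so the input is skipped entirely
        outputs.append(acc * scale)
    return outputs


if __name__ == "__main__":
    w = [[0.9, -0.05, -1.2], [0.1, 0.7, -0.6]]  # made-up example weights
    q, s = absmean_ternary(w)
    print(q, s)
    print(ternary_matvec(q, s, [2.0, 3.0, 1.0]))
```

The inner loop never multiplies an input by a weight; it only adds, subtracts, or skips. That is where the energy savings described above come from, at the price of a coarser approximation of the original weights.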

The Sensory Paradigm: Multimodal Integration and World Models

The historical hierarchy of data, where text was the primary interface, is being inverted. Leading "omni-models" now treat text, audio, video, and screenshots as equal peers within a single context window. This allows enterprises to "sense" the world continuously, for example by monitoring customer interactions across voice and screen in real time to spot anomalies or coaching moments. An even more profound trend is the development of World Models. Unlike traditional NLP, which focuses on surface-level text, world models build internal representations of physical reality. Pursued by labs like Yann LeCun’s AMI Labs and Google DeepMind, these systems are designed to learn how the world behaves, including mass, momentum, and spatial relationships. Proponents see this grounding in reality as a critical step toward reducing the "hallucination" problem and, eventually, toward Artificial General Intelligence (AGI).

From Retrieval to Knowledge Runtimes

The way businesses leverage their internal data is also evolving through Agentic RAG (Retrieval-Augmented Generation). We are moving past simple document retrieval toward autonomous "knowledge runtimes". In this framework, AI agents don't just find a document; they plan a research strategy, verify factual claims across multiple sources, and reflect on their own findings before presenting them. The result is an auditable trail of what was retrieved and why, which supports the kind of verifiable and governable infrastructure needed to meet new regulatory standards such as the EU AI Act. The Act entered into force in August 2024, and its obligations phase in over the following years, with most scheduled to apply from August 2026.
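The plan, retrieve, verify, and reflect loop described above can be sketched as a toy Python program. Everything here is a hypothetical stand-in: the in-memory `CORPUS`, the keyword-based `plan` and `retrieve` functions, and the "at least two sources" verification rule are assumptions chosen for illustration. In a real system a language model and a search index would fill those roles.

```python
from dataclasses import dataclass, field

# Hypothetical in-memory corpus: source name -> text.
CORPUS = {
    "policy_handbook": "Refunds are issued within 14 days of a return request.",
    "support_faq": "Refunds are issued within 14 days. Shipping takes 3 days.",
    "old_memo": "Refunds are issued within 30 days of a return request.",
}

STOPWORDS = {"how", "long", "do", "does", "take", "what", "is", "the", "a"}


@dataclass
class Finding:
    claim: str
    supporting_sources: list = field(default_factory=list)
    verified: bool = False


def plan(question: str) -> list:
    """Stand-in planner: reduce the question to search keywords."""
    words = [w.strip("?.,").lower() for w in question.split()]
    return [w for w in words if w and w not in STOPWORDS]


def retrieve(term: str) -> list:
    """Return (source, sentence) pairs whose sentence mentions the term."""
    hits = []
    for source, text in CORPUS.items():
        for sentence in text.split(". "):
            sentence = sentence.strip().rstrip(".")
            if term in sentence.lower():
                hits.append((source, sentence))
    return hits


def verify(claim: str, min_sources: int = 2) -> Finding:
    """Mark a claim verified only if enough independent sources contain it."""
    sources = [name for name, text in CORPUS.items() if claim.lower() in text.lower()]
    return Finding(claim, sources, verified=len(sources) >= min_sources)


def research(question: str) -> list:
    """Plan, retrieve, verify, then reflect by dropping unverified claims."""
    findings = {}
    for term in plan(question):
        for _source, sentence in retrieve(term):
            if sentence not in findings:
                findings[sentence] = verify(sentence)
    return [f for f in findings.values() if f.verified]


if __name__ == "__main__":
    for finding in research("How long do refunds take?"):
        print(finding.claim, "<-", finding.supporting_sources)
```

In this toy corpus the outdated "30 days" memo is retrieved but discarded, because no second source corroborates it. Keeping the supporting sources attached to each finding is what makes the output auditable.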

Responsible Development and Safety Disentanglement

As NLP systems gain autonomy, the focus on safety has intensified. Recent research has identified a subtle trade-off: techniques that improve factual truthfulness can unintentionally weaken a model's safety guardrails. This occurs because the internal components responsible for "refusal" (safety) and "truthfulness" often overlap. Advanced mitigation strategies now use Sparse Autoencoders (SAEs) to disentangle these features, allowing developers to make models more accurate without compromising their ability to refuse harmful requests.

The Road Ahead

The trajectory of NLP suggests that by 2030, the subfields of language processing, computer vision, and robotics will have fully merged into unified systems capable of complex cognitive tasks with minimal human intervention. Success for organisations will lie in moving beyond "calculating text" to "simulating reality," applying these technologies thoughtfully to solve real business problems while ensuring legal validity and accountability.

Sources include:

The Future of Human-AI Communication and Language Models, Journal of Scientific and Engineering Research

Advances to Low-bit Quantization Enable LLMs on Edge Devices, Microsoft Research

What is Agentic RAG?, IBM