In the modern digital economy, data is no longer just a byproduct of business; it is the primary engine of competitive advantage. Yet for many UK organisations, the bridge between raw data and actionable insight remains fragile, manual, and frustratingly slow. As global data creation grows at a staggering rate, traditional "bespoke" methods of managing analytics are failing to keep pace. Past surveys of major analytics projects found that 80 percent of companies’ time is spent on tasks such as preparing data, rather than on analysis itself. To solve this, forward-thinking leaders are turning to DataOps, a methodology that applies the rigour of software engineering to the world of data. By automating workflows and fostering collaboration, DataOps allows companies to convert data from "potential energy" into "kinetic business action".

What is DataOps?

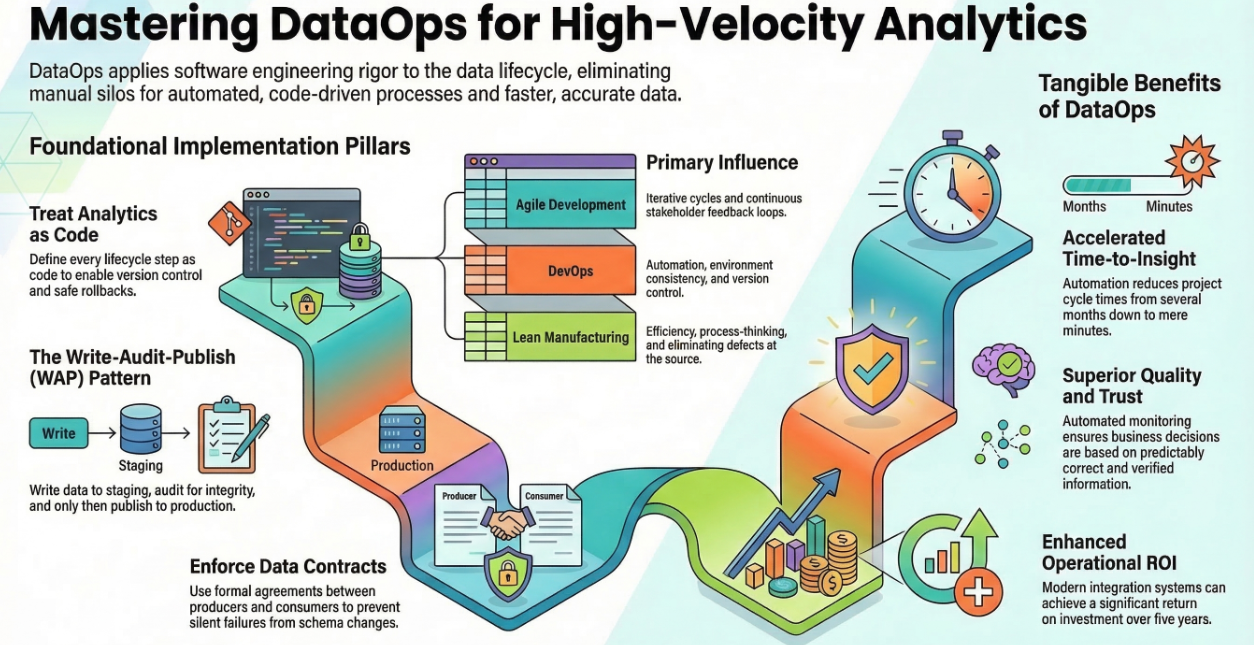

DataOps is a collaborative data management strategy that combines the principles of Agile development, DevOps, and Lean manufacturing to streamline the entire data lifecycle. Its primary objective is to reduce "cycle time": the duration between the start of an analytics project and the delivery of a finished, ready-for-use report or model. Although it borrows heavily from software engineering, it is important to understand the nuance of DataOps vs DevOps. DevOps focuses on the rapid release of code and software, whereas DataOps manages the dynamic and often unpredictable nature of data itself, ensuring its quality, reliability, and governance across disparate systems.
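To make "cycle time" concrete, here is a minimal sketch of how a team might measure it from its own project log. The project names and dates are invented purely for illustration, and `cycle_time_days` is a hypothetical helper rather than part of any DataOps tool.

```python
from datetime import date
from statistics import median

# Hypothetical project log: (name, start date, delivery date).
# These entries are made up for illustration only.
projects = [
    ("churn-report", date(2025, 1, 6), date(2025, 2, 17)),
    ("sales-forecast", date(2025, 3, 3), date(2025, 3, 24)),
    ("stock-dashboard", date(2025, 4, 7), date(2025, 4, 21)),
]


def cycle_time_days(start: date, delivered: date) -> int:
    """Days between project start and delivery of the finished output."""
    return (delivered - start).days


# Cycle time per project, plus the median as a single figure to track.
cycle_times = {name: cycle_time_days(s, d) for name, s, d in projects}
median_cycle_time = median(cycle_times.values())

for name, days in cycle_times.items():
    print(f"{name}: {days} days")
print(f"Median cycle time: {median_cycle_time} days")
```

Tracking a figure like this over successive projects is one simple way to see whether cycle time is actually falling.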

The Tangible Benefits of a DataOps Strategy

Adopting DataOps best practices offers far more than just technical efficiency; it is a fundamental business strategy that delivers four core advantages:

Core DataOps Best Practices for 2026

For UK SMEs looking to establish excellence, implementing DataOps requires a focus on these four foundational pillars:

The Business Case: Speed, Quality, and ROI

For businesses, DataOps is not merely a technical upgrade; it is a financial strategy. By shifting from a reactive "firefighting" posture to a proactive, automated one, organisations can significantly shorten the time it takes to reach actionable insights. By starting small, perhaps with a single dataset, and applying these best practices, your organisation can turn data into a true engine of strategic growth.

Sources include:

What is DataOps?, Hewlett Packard

How companies can use DataOps to jump-start advanced analytics, McKinsey

How Virgin Media O2 uses data contracts to enable trusted data and scalable AI products, Google